AGI by October 2025, that’s the trendline…

Of all the popular benchmarks used today to test models, I personally think the GPQA Diamond set offers the most insight into predicting when AGI will be achieved. The test set is described by its creators in their published research paper as:

> A challenging dataset of 448 multiple-choice questions written by domain experts in biology, physics, and chemistry. We ensure that the questions are high-quality and extremely difficult: experts who have or are pursuing PhDs in the corresponding domains reach 65% accuracy (74% when discounting clear mistakes the experts identified in retrospect), while highly skilled non-expert validators only reach 34% accuracy, despite spending on average over 30 minutes with unrestricted access to the web (i.e., the questions are “Google-proof”).

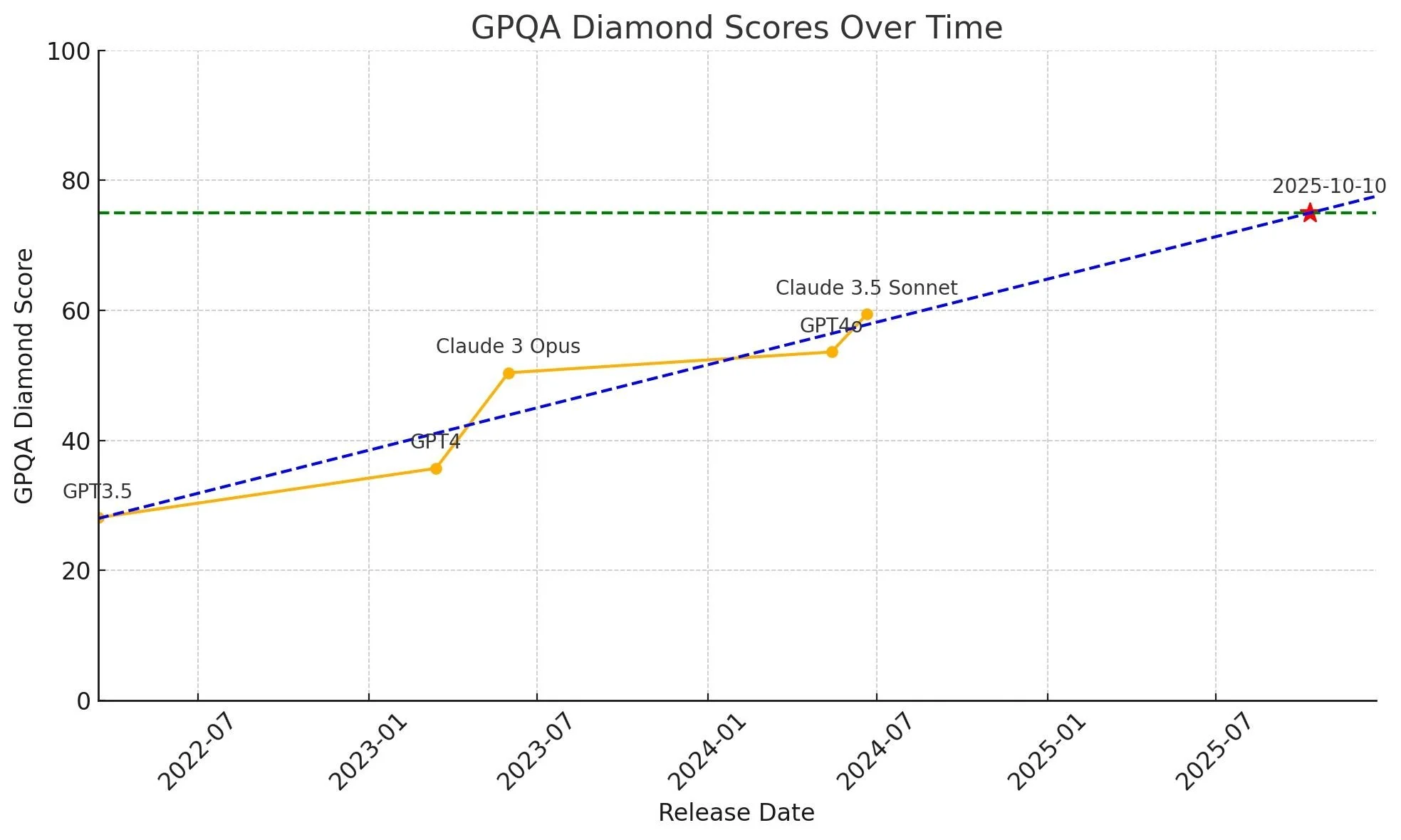

So, taking a score of 75% as the threshold for surpassing most domain experts in some of the most complicated science fields we have today, we simply plot the GPQA scores of existing models (each of which was the top-performing model in the world at its initial release) against their release dates to establish a trendline.

By that trendline, we’ve got just over a year, and that’s likely a conservative estimate in both time and performance threshold.
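For anyone who wants to redo the extrapolation themselves, here is a rough sketch of how a trendline like this could be fit and projected forward. It assumes a plain least-squares straight line, which may not be exactly how the chart above was produced. The function names (`fit_trendline`, `crossing_date`) are my own, and the data points are made-up placeholders, not real GPQA scores, so swap in the actual release dates and scores before reading anything into the result.

```python
from datetime import date, timedelta


def fit_trendline(points):
    """Ordinary least-squares line through (release_date, score) pairs.

    x is measured in days since the earliest release date.
    Returns (slope_per_day, intercept_at_earliest_date).
    """
    start = min(d for d, _ in points)
    xs = [(d - start).days for d, _ in points]
    ys = [score for _, score in points]
    n = len(points)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("need at least two distinct release dates")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def crossing_date(points, threshold=75.0):
    """Date on which the fitted line reaches `threshold` (in score points)."""
    slope, intercept = fit_trendline(points)
    if slope <= 0:
        raise ValueError("trend is not increasing, so it never crosses")
    start = min(d for d, _ in points)
    days = (threshold - intercept) / slope
    return start + timedelta(days=round(days))


# Placeholder values for illustration only. These are NOT real benchmark
# results; replace them with actual (release date, GPQA score) pairs.
placeholder_points = [
    (date(2000, 1, 1), 30.0),
    (date(2000, 9, 1), 41.0),
    (date(2001, 6, 1), 52.0),
]

print(crossing_date(placeholder_points, threshold=75.0))
```

A straight line is the simplest choice, but benchmark scores are capped at 100%, so a linear fit will eventually overshoot. Over a short horizon that probably matters little, though it is worth keeping in mind when extrapolating further out.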